This is the third in a series of posts where I interview XACML vendors. This time we talk to NextLabs.

Why does the world need XACML? What benefits do your customers realize?

Over the last 20 years, IT has focused on building walls around networks and applications. Now, with cross-organizational collaboration, cloud, and mobile, we are finding that those walls are no longer relevant for protecting critical information.

The world needs XACML to protect critical information in today’s collaborative business and IT environment.

At NextLabs we focus on applying Extensible Access Control Markup Language (XACML) to information protection to enable our customers to accelerate global collaboration while simultaneously protecting their most sensitive intellectual property.

Using Attribute-Based Access Control (ABAC) and externalized authorization we can protect data based on its sensitivity, defined by attributes, across applications and systems. Traditional access control models such as Role-Based Access Control (RBAC) and Access Control Lists (ACLs) simply do not scale to address the information protection problem.
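To make the contrast with RBAC and ACLs concrete, here is a minimal Python sketch of an attribute-based decision. It is not NextLabs' engine and not XACML syntax: the attribute names (`clearance`, `sensitivity`, `export_controlled`, `nationality`), the sensitivity scale, and both rules are hypothetical, and the combining function is a simplified deny-overrides that ignores XACML's Indeterminate handling.

```python
from dataclasses import dataclass
from typing import Callable

# Attributes grouped by XACML-style category, e.g. {"subject": {...}, "resource": {...}}.
Attributes = dict[str, dict[str, object]]

# Hypothetical sensitivity scale shared by user clearances and document labels.
LEVELS = {"public": 0, "internal": 1, "confidential": 2}

@dataclass
class Rule:
    effect: str                              # "Permit" or "Deny"
    condition: Callable[[Attributes], bool]  # True when the rule applies

def deny_overrides(rules: list[Rule], request: Attributes) -> str:
    """Simplified deny-overrides: any applicable Deny wins, else Permit if one applied."""
    decision = "NotApplicable"
    for rule in rules:
        if rule.condition(request):
            if rule.effect == "Deny":
                return "Deny"
            decision = "Permit"
    return decision

rules = [
    # Permit when the user's clearance is at least the document's sensitivity.
    Rule("Permit", lambda r: LEVELS[r["subject"]["clearance"]]
                              >= LEVELS[r["resource"]["sensitivity"]]),
    # Deny export-controlled documents to users outside an allowed country.
    Rule("Deny", lambda r: bool(r["resource"].get("export_controlled", False))
                           and r["subject"]["nationality"] != "US"),
]

req = {
    "subject": {"clearance": "confidential", "nationality": "DE"},
    "resource": {"sensitivity": "internal", "export_controlled": True},
}
print(deny_overrides(rules, req))  # Deny: the export rule overrides the clearance Permit
```

Because the rules test attributes rather than naming individual users or documents, the same two rules cover every newly classified document without per-resource list maintenance, which is the scaling argument made above.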

What products do you have in the XACML space?

NextLabs has taken an industry-solution approach to the market. We provide several industry solutions for regulatory compliance, secure partner collaboration, and intellectual property protection.

Each solution consists of pre-built policy libraries that implement industry best practices, pre-built policy enforcement points (PEPs) for critical enterprise applications, our Control Center Information Control Platform based on XACML, and pre-built reporting.

Control Center is our Information Control Platform. It has several components:

- Control Center Server – the Control Center Server includes our Policy Administration Point (PAP) and additional services necessary for information control use cases. These include:

  - Information Classification Services – a comprehensive set of services that automate information classification, such as content analysis, data tagging, and user-driven classification

  - Policy Development and Lifecycle Management Services – services that govern and simplify the development and management of policy, such as delegated administration, approval workflow, testing and validation, audit trail, versioning, and dictionary services. On top of this, we provide Policy Studio, a graphical integrated development environment (IDE) for policy

  - Policy Deployment and PDP Management Services – services that allow us to reliably deploy policies to distributed PDPs, even over the public internet

  - Audit and Reporting Services – role-based dashboards, analytics, and reporting that provide insights into information activity and policy compliance

- Control Center Policy Controller – the Policy Controller is our policy decision point (PDP). We provide three editions of the Policy Controller:

  - Endpoint Policy Controller – designed to run on laptops and desktops, even when disconnected

  - Server Policy Controller – designed to run co-located with server-based applications; it can run as a service/daemon or be embedded into an application

  - Policy Controller Service – designed to run as a stand-alone PDP service in a J2EE application server

NextLabs provides over a dozen pre-built Policy Enforcement Points (PEPs) for common applications and systems. These are grouped into three product lines:

- Entitlement Management – pre-built PEPs for server applications, including:

  - Document Management (Microsoft SharePoint, SAP Document Management)

  - SAP Enterprise Resource Planning

  - Product Lifecycle Management (SAP PLM, Dassault Enovia)

  - Collaboration (CIFS and NFS File Servers)

- Collaborative Rights Management (cRM) – applies XACML to protect unstructured data (files)

- Data Protection – a suite of endpoint PEPs for removable devices, networking, email applications, web meeting applications, and unified communication applications

What versions of the spec do you support? What optional parts? What profiles?

We support the core 2.0 and 3.0 specifications as well as the SAML, EC-US and IPC profiles.

What sets your product apart from the competition?

At NextLabs we differentiate ourselves through comprehensive industry solutions and our focus on information protection.

XACML is a generic authorization standard and can be applied to many things. Making it useful to the business buyer requires significant work beyond the standard: resources need attributes (i.e., information needs to be classified), PEPs need to be built, obligations and advice need to be implemented, and policies need to be designed, developed, and tested.
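Obligations and advice are a good example of that extra work, because the PEP, not the PDP, has to act on them. XACML 3.0 defines several PEP biases; the minimal Python sketch below assumes a deny-biased PEP, which grants access only on a Permit decision whose obligations it understands and can discharge, and which may ignore advice it does not recognize. The `Response` shape, the `HANDLERS` registry, and the obligation IDs are hypothetical, and obligations attached to a Deny are not discharged here, for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Response:
    decision: str                                         # "Permit", "Deny", "NotApplicable", "Indeterminate"
    obligations: list[str] = field(default_factory=list)  # must be discharged for Permit to stand
    advice: list[str] = field(default_factory=list)       # optional hints the PEP may ignore

audit_log: list[str] = []

def log_access() -> bool:
    """Hypothetical obligation handler: record the access and report success."""
    audit_log.append("access logged")
    return True

# Hypothetical registry of obligation/advice IDs this PEP understands.
HANDLERS: dict[str, Callable[[], bool]] = {"log-access": log_access}

def enforce(resp: Response) -> bool:
    """Deny-biased PEP: grant access only for Permit with all obligations discharged."""
    if resp.decision != "Permit":
        return False  # Deny, NotApplicable and Indeterminate all result in denial
    if any(ob not in HANDLERS for ob in resp.obligations):
        return False  # an obligation we do not understand turns Permit into a denial
    if not all(HANDLERS[ob]() for ob in resp.obligations):
        return False  # a handler failed, so the obligation was not discharged
    for adv in resp.advice:
        handler = HANDLERS.get(adv)
        if handler is not None:
            handler()  # act on advice we recognize; unknown advice is ignored
    return True
```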

We have addressed this solution gap to make XACML useful for protecting critical information, and that’s what sets us apart.

What customers use your product? What is your biggest deployment?

NextLabs works with leading companies in the Manufacturing, High-Tech, Aerospace and Defense, Chemical, Energy, and Industrial Equipment industries. These companies typically have very high-value or sensitive intellectual property, global operations and are subject to strict global regulations.

We have multiple deployments above 50,000 users and have a project that will soon reach 100,000 users.

We have a few webinars where you can hear how customers such as GE and Tyco benefited from our solutions. Recently, one of our customers, BAE Systems, was recognized by CIO magazine for its use of our product.

What programming languages do you support? Will you support the REST profile? And JSON?

We support Java, C#, C++, SOAP, and SAP ABAP. We plan to support the REST and JSON profiles in a future release.

Do you support OpenAz? Spring-Security? Other open source efforts?

NextLabs contributed the C++ implementation of OpenAz and also supports OpenAz in Java.

We are committed to open APIs for authorization since this is critical to the growth of the XACML market and will support any effort that moves the industry forward in this regard.

How easy is it to write a PEP for your product? And a PIP? How long does an implementation of your product usually take?

NextLabs provides over a dozen PEP products and pre-built PIP integrations, which eliminate the need to build PEPs or PIPs for many common commercial applications.

For custom PEPs and PIPs, the time required depends on the nature of the application and the use case you are trying to support, and can range from hours to weeks.

Installing the product takes only hours, but the time required to take a solution into production varies with the number and type of applications and the policy use cases.
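To give a feel for what a custom PEP and PIP involve, here is a minimal Python sketch for a hypothetical document viewer. Every name in it (`AttributeSource`, `DirectoryPIP`, `SimplePDP`, `pep_open_document`, the `department` attribute) is made up for illustration and is not a NextLabs or OpenAz API; the in-memory dictionary stands in for a real directory or database.

```python
from typing import Optional, Protocol

class AttributeSource(Protocol):
    """Hypothetical PIP contract: return an attribute value, or None if unknown."""
    def lookup(self, entity_id: str, attribute: str) -> Optional[str]: ...

class DirectoryPIP:
    """Hypothetical PIP; the dict stands in for a real directory or HR database."""
    def __init__(self, records: dict[str, dict[str, str]]) -> None:
        self.records = records

    def lookup(self, entity_id: str, attribute: str) -> Optional[str]:
        return self.records.get(entity_id, {}).get(attribute)

class SimplePDP:
    """Toy PDP with one rule: users may read documents owned by their own department."""
    def __init__(self, pip: AttributeSource) -> None:
        self.pip = pip

    def decide(self, request: dict[str, str]) -> str:
        # Use the attribute if the PEP supplied it, otherwise ask the PIP.
        dept = request.get("department") or self.pip.lookup(request["user"], "department")
        if dept is None:
            return "Indeterminate"  # a required attribute could not be resolved
        if request["action"] == "read" and dept == request["owner_department"]:
            return "Permit"
        return "Deny"

def pep_open_document(pdp: SimplePDP, user: str, doc: dict[str, str]) -> str:
    """Hypothetical PEP hook placed where the application opens a document."""
    decision = pdp.decide({"user": user, "action": "read",
                           "owner_department": doc["department"]})
    if decision != "Permit":
        raise PermissionError(f"{user} may not read {doc['name']}: {decision}")
    return doc["body"]

pdp = SimplePDP(DirectoryPIP({"alice": {"department": "engineering"}}))
doc = {"name": "spec.pdf", "department": "engineering", "body": "Q3 design notes"}
print(pep_open_document(pdp, "alice", doc))  # Q3 design notes
```

The PEP hook itself is only a few lines; in a real application, much of the effort tends to go into finding the right interception point and connecting the PIP to the system of record, which is one reason the time needed varies so much.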

Can your product be embedded (i.e. run in-process)?

Yes, our Policy Controller can be embedded into another application.

What optimizations have you made? Can you share performance numbers?

Latency, whether introduced by external queries to Policy Information Points (PIPs) or by evaluating large numbers of policies, is a concern for all customers.

We designed our architecture with the principle of a PDP that can run completely off-line – with the ability to make complex decisions without any network calls. This was a critical requirement for our endpoint products and has the benefit of eliminating latency associated with network roundtrips or external queries to PIPs.

To enable our off-line PDP we developed a patented policy deployment technology, called ICENet, which pre-evaluates multiple dimensions of policy when it is deployed to distributed PDPs.
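The interview only says that ICENet pre-evaluates policy when it is deployed, so the following is not ICENet. It is a generic partial-evaluation sketch of the underlying idea, under stated assumptions: attributes that are fixed for a given PDP (here a hypothetical `site` attribute) are resolved once at deployment, policies that can never apply on that PDP are dropped, and the residual policies reference only attributes available locally at decision time, so no network call is needed. The policy names and attributes are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Policy:
    name: str
    effect: str                               # "Permit" or "Deny"
    conditions: tuple[tuple[str, str], ...]   # (attribute, required value); all must match

def specialize(policies: list[Policy], static_attrs: dict[str, str]) -> list[Policy]:
    """Partially evaluate policies against attributes fixed for one PDP at deploy time."""
    residual = []
    for p in policies:
        remaining = []
        applicable = True
        for attr, value in p.conditions:
            if attr in static_attrs:
                if static_attrs[attr] != value:
                    applicable = False  # can never match on this PDP, so drop the policy
                    break
                # Otherwise the condition already holds and need not be rechecked.
            else:
                remaining.append((attr, value))  # left for decision time
        if applicable:
            residual.append(Policy(p.name, p.effect, tuple(remaining)))
    return residual

def decide(residual: list[Policy], runtime_attrs: dict[str, str]) -> str:
    """Deny-overrides over residual policies using only locally available attributes."""
    decision = "NotApplicable"
    for p in residual:
        if all(runtime_attrs.get(a) == v for a, v in p.conditions):
            if p.effect == "Deny":
                return "Deny"
            decision = "Permit"
    return decision

policies = [
    Policy("no-usb-copy-site-b", "Deny", (("site", "B"), ("action", "copy-to-usb"))),
    Policy("open-cad-files", "Permit", (("app", "cad"), ("action", "open"))),
    Policy("no-print-site-a", "Deny", (("site", "A"), ("action", "print"))),
]
laptop_policies = specialize(policies, {"site": "B"})  # done once, when policy is deployed
print([p.name for p in laptop_policies])  # ['no-usb-copy-site-b', 'open-cad-files']
print(decide(laptop_policies, {"action": "copy-to-usb"}))  # Deny
```

Moving work from decision time to deployment time shrinks the policy set each PDP has to scan and removes the need to fetch site-level attributes over the network, at the cost of redeploying whenever a static attribute changes.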

99% of our policy queries are under 5 milliseconds, with most of those under 1 millisecond.

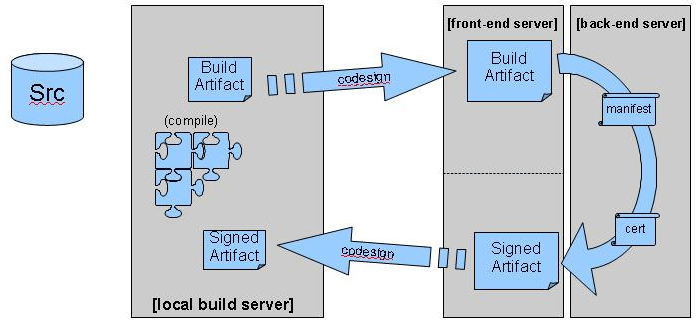
